Wind Turbine Blades: From Point Estimates to Full Uncertainty

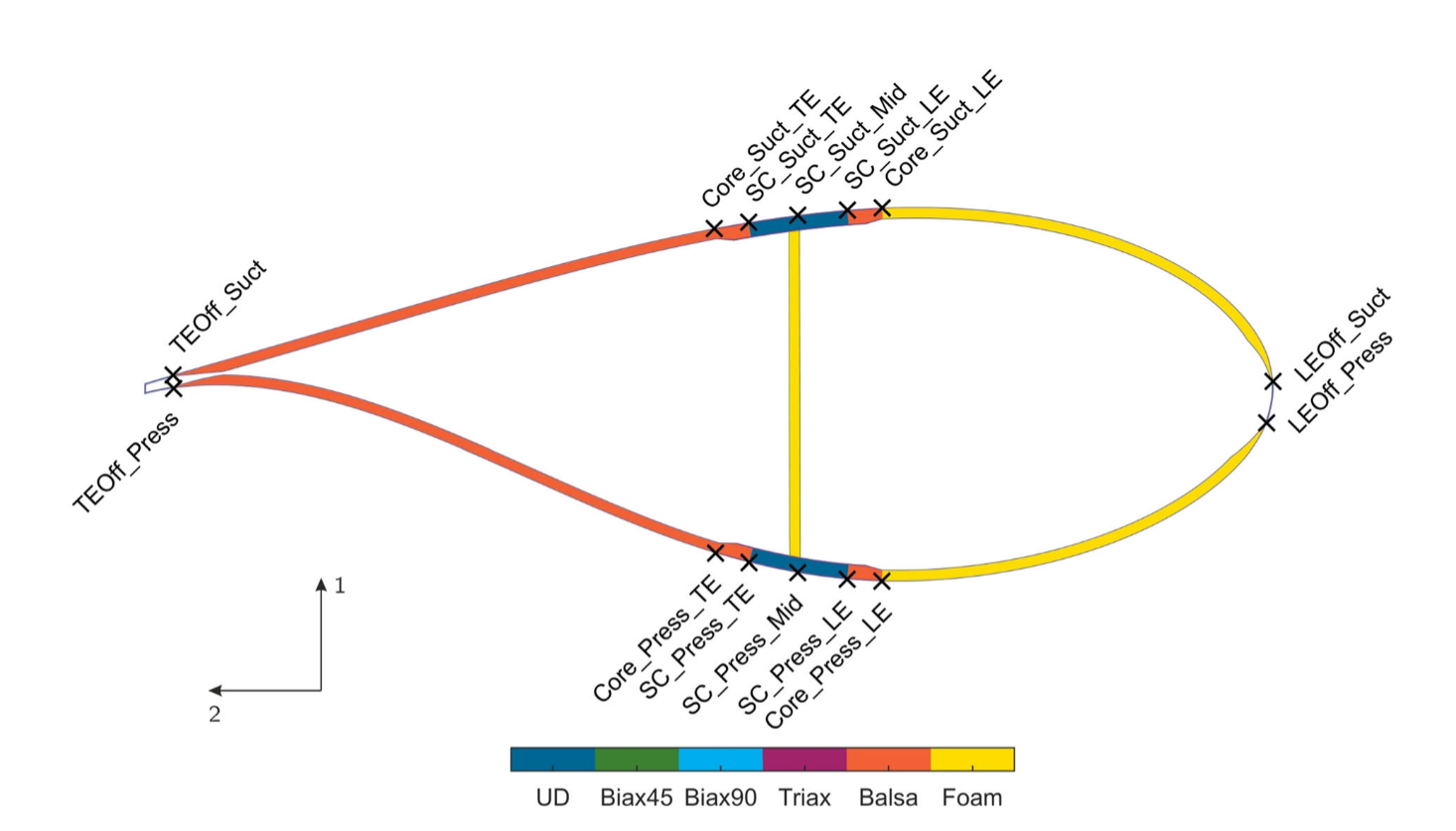

Wind turbine blades are safety-critical structures exposed to extreme loads. Manufacturing deviations, even within allowed tolerances, cause mismatches between the designed blade and the one that rolls off the production line. Operators want to know the actual internal properties (material parameters, layup geometry), but these can’t be measured directly. The standard workaround: vibrate the blade, measure how it oscillates, and solve the inverse problem to recover the structural parameters from that response.

I collaborated with wind energy researchers at Leibniz University Hannover to replace their existing inverse-modeling pipeline with an invertible neural network. The old approach worked, but was slow and gave only point estimates — no indication of confidence or ambiguity. The INN produces a full probability distribution over possible structural states in a single forward pass. This revealed which parameters are tightly constrained by the measurements and which remain fundamentally ambiguous — including some non-obvious degeneracies the engineers hadn’t anticipated. The result: faster inference, plus a clear picture of what you actually know versus what you’re guessing.
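To make the difference between a point estimate and a posterior concrete, here is a toy sketch. It is not the INN from the paper: it uses plain rejection sampling as a stand-in, and the single spring-mass oscillator, the prior ranges, and all function names are assumptions chosen for illustration. The point is only that a measured frequency pins down the ratio of stiffness to mass, while each parameter on its own stays ambiguous.

```python
import math
import random
import statistics

def natural_frequency(k, m):
    """Natural frequency in Hz of a single spring-mass oscillator (toy forward model)."""
    return math.sqrt(k / m) / (2 * math.pi)

def sample_posterior(f_observed, tolerance, n_draws=200_000, seed=0):
    """Rejection sampling: keep prior draws whose predicted frequency matches the measurement."""
    rng = random.Random(seed)
    accepted = []
    for _ in range(n_draws):
        k = rng.uniform(500.0, 2000.0)  # stiffness prior (hypothetical range)
        m = rng.uniform(5.0, 20.0)      # mass prior (hypothetical range)
        if abs(natural_frequency(k, m) - f_observed) < tolerance:
            accepted.append((k, m))
    return accepted

def relative_spread(values):
    """Standard deviation divided by mean, as a unitless measure of posterior width."""
    return statistics.stdev(values) / statistics.mean(values)

# Simulate one measurement from a "true" blade with k = 1000, m = 10.
f_obs = natural_frequency(1000.0, 10.0)
posterior = sample_posterior(f_obs, tolerance=0.01)

spread_k = relative_spread([k for k, _ in posterior])
spread_m = relative_spread([m for _, m in posterior])
spread_ratio = relative_spread([k / m for k, m in posterior])

print(f"accepted samples: {len(posterior)}")
print(f"relative spread of k:   {spread_k:.3f}")
print(f"relative spread of m:   {spread_m:.3f}")
print(f"relative spread of k/m: {spread_ratio:.3f}")
```

A point estimate would report one (k, m) pair and hide the fact that any pair along the same ratio fits the measurement equally well. The posterior samples make that degeneracy visible.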

Read the paper in Wind Energy (open access)

Hair Reconstruction: From Studio Rigs to Smartphone Selfies

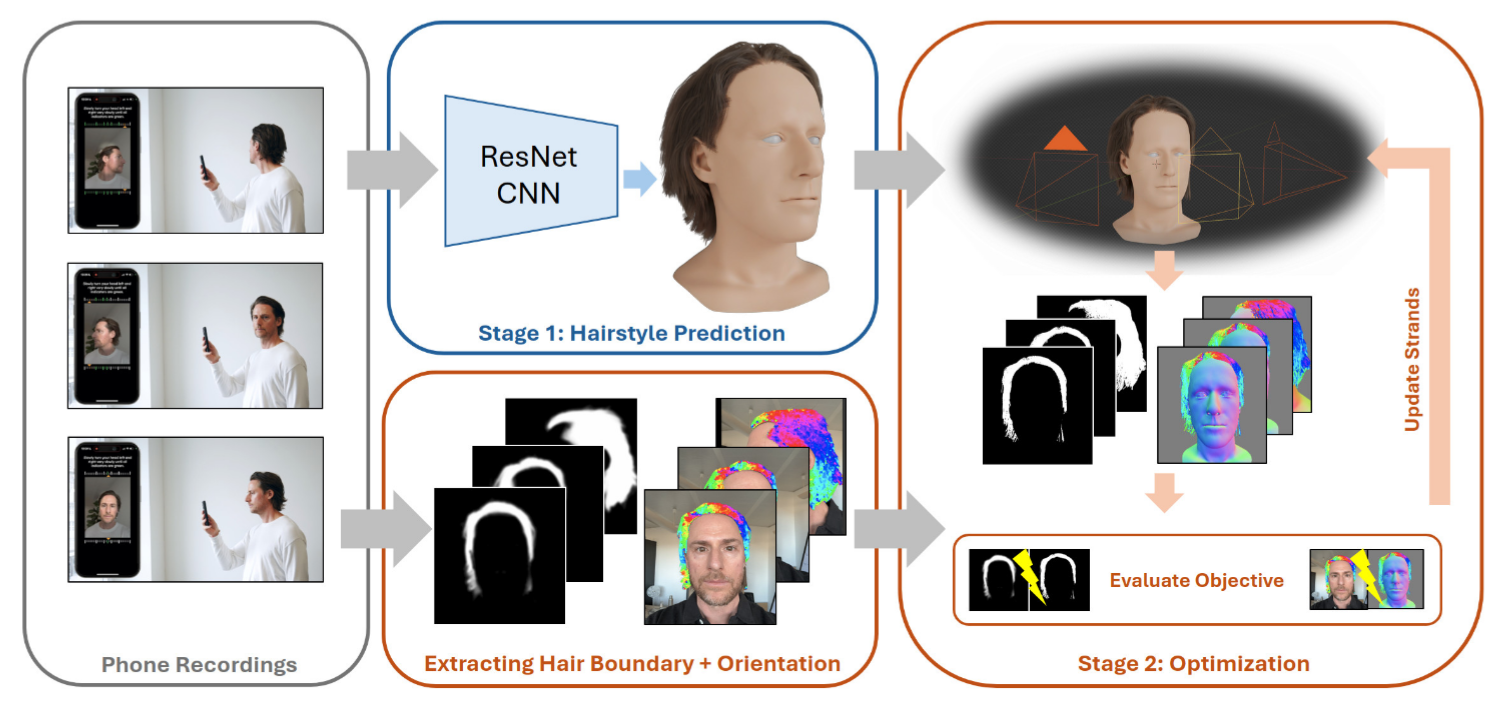

Strand-based hair is the gold standard for realistic characters in games, film, and VR. However, capturing it traditionally required expensive multi-camera rigs and extensive artist cleanup. In my previous role, I helped build an end-to-end pipeline that works from just a handful of smartphone photos. The system runs a CNN to predict an initial hairstyle, then refines strand positions using differentiable rendering to match observed hair boundaries and orientations. A major bottleneck was training data: ground-truth strand geometry for real photos doesn’t exist. We solved this by generating a large synthetic dataset with diverse hairstyles, varying lighting, and realistic augmentations.

The reconstruction runs in about two minutes and outputs clean strand assets ready for Blender, Unreal, Unity, or other 3D tools. A key lesson from this project: for real-world robustness, a single learned model rarely cuts it. The CNN alone produces plausible but generic results—it’s the multi-stage refinement that makes individual wisps match the actual recording. This combination of learned priors with classical optimization is a pattern I’ve seen pay off repeatedly when bridging research methods to production systems.
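The "learned prior plus classical optimization" pattern can be sketched in a few lines. This is a toy under loud assumptions, not the production pipeline: the "network prediction" is a hard-coded straight strand, the observation is a set of 2D target points rather than rendered boundaries and orientations, and the loss (a data term plus a smoothness term) and every name here are hypothetical.

```python
def refine_strand(initial, observed, steps=200, lr=0.1, smooth_weight=0.1):
    """Gradient descent on sum |p_i - o_i|^2 + w * sum |p_{i+1} - p_i|^2."""
    pts = [list(p) for p in initial]
    n = len(pts)
    for _ in range(steps):
        grads = [[0.0, 0.0] for _ in range(n)]
        # Data term: pull each point toward its observed position.
        for i in range(n):
            for d in range(2):
                grads[i][d] += 2.0 * (pts[i][d] - observed[i][d])
        # Smoothness term: penalize large jumps between neighboring points.
        for i in range(n - 1):
            for d in range(2):
                diff = pts[i + 1][d] - pts[i][d]
                grads[i + 1][d] += 2.0 * smooth_weight * diff
                grads[i][d] -= 2.0 * smooth_weight * diff
        for i in range(n):
            for d in range(2):
                pts[i][d] -= lr * grads[i][d]
    return pts

def data_error(pts, observed):
    """Sum of squared distances between strand points and observations."""
    return sum((p[0] - o[0]) ** 2 + (p[1] - o[1]) ** 2 for p, o in zip(pts, observed))

# Stand-in for the CNN output: a plausible but generic straight strand.
initial = [(0.0, -0.1 * i) for i in range(10)]
# Stand-in for the observation: the same strand, but curving sideways.
observed = [(0.02 * i * i, -0.1 * i) for i in range(10)]

refined = refine_strand(initial, observed)
print(f"error before refinement: {data_error(initial, observed):.3f}")
print(f"error after refinement:  {data_error(refined, observed):.3f}")
```

The initial guess matters because it puts the optimizer in the right basin. The refinement matters because it is the only step that looks at this particular capture.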

The product is live: you can try it yourself at copresence.tech. For more technical details, see the SIGGRAPH 2025 talk.

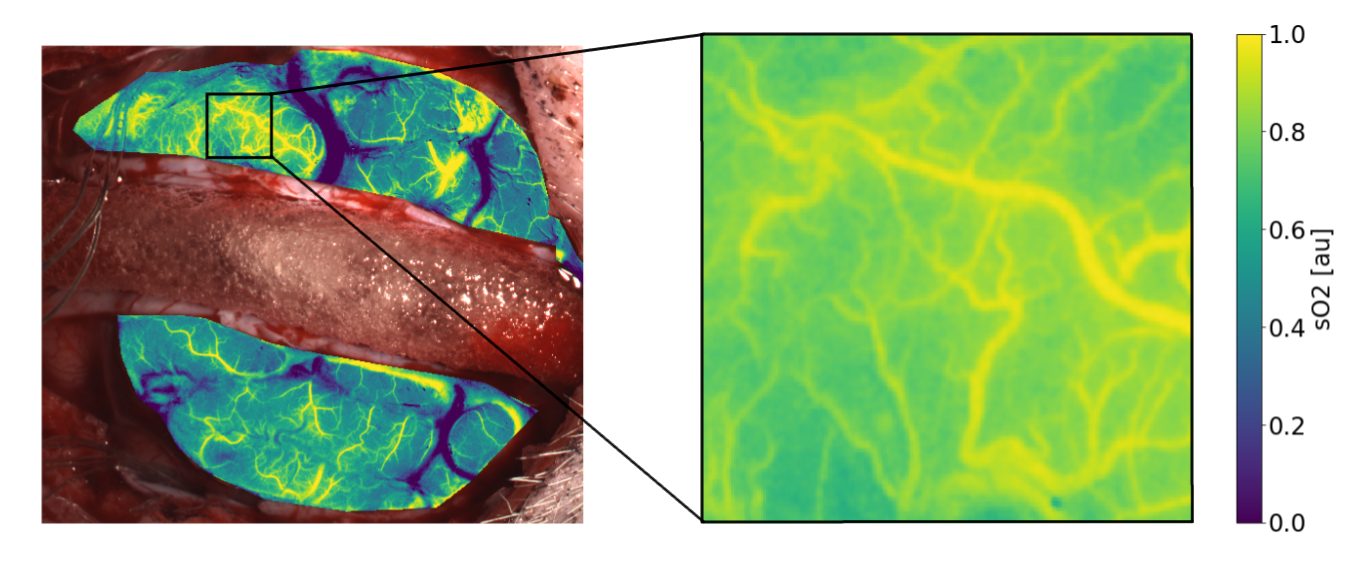

Surgical Imaging: Picking the Right Camera Before You Build It

Multispectral cameras used during surgery can estimate tissue properties like oxygenation and blood content in real-time — valuable information for surgeons. But before building or buying such a device, you need to know: can this camera configuration actually recover the parameters we care about? I worked with researchers at DKFZ (German Cancer Research Center) to answer this question using Bayesian deep learning. Instead of predicting a single value, the model outputs a full probability distribution over possible tissue states, letting us quantify not just error, but fundamental ambiguity.

The key finding: some camera setups simply cannot recover the relevant tissue parameters. The posterior distribution is so wide that the estimate is nearly meaningless, regardless of how good your algorithm is. More surprisingly, a purpose-built 3-band medical camera performed comparably to an 8-band research system costing an order of magnitude more. This reframes camera selection from “which has the lowest error on a benchmark” to “which configurations are fundamentally viable”, a question worth answering before committing to hardware.
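One way to turn "the posterior is too wide" into a check is to compare the width of a credible interval against the physically possible range of the parameter. The sketch below is an assumption-laden illustration, not the paper's evaluation: the posterior samples are synthetic, the 25% viability threshold is arbitrary, and the function names are made up.

```python
import random
import statistics

def credible_width_95(samples):
    """Width of the central 95% interval, from the 2.5% and 97.5% quantiles."""
    cuts = statistics.quantiles(samples, n=40)  # 39 cut points, one every 2.5%
    return cuts[-1] - cuts[0]

def is_viable(samples, param_range, max_fraction=0.25):
    """Viable if the 95% interval covers at most max_fraction of the parameter range."""
    return credible_width_95(samples) / param_range <= max_fraction

rng = random.Random(0)
# Synthetic posteriors over oxygenation (range 0 to 1) for two hypothetical cameras.
informative_camera = [rng.gauss(0.7, 0.03) for _ in range(5000)]
uninformative_camera = [rng.uniform(0.0, 1.0) for _ in range(5000)]

for name, samples in [("informative", informative_camera),
                      ("uninformative", uninformative_camera)]:
    width = credible_width_95(samples)
    print(f"{name}: 95% width = {width:.2f}, viable = {is_viable(samples, 1.0)}")
```

Both synthetic cameras could report a similar mean on a benchmark. Only the interval width shows that the second one has learned almost nothing from the measurement.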

Read the paper in International Journal of Computer Assisted Radiology and Surgery: Springer (DOI) · arXiv (open access)